Outside the high-performance computing, or HPC, community, exascale may seem more like fodder for science fiction than a powerful tool for scientific research. Yet, when seen through the lens of real-world applications, exascale computing goes from ethereal concept to tangible reality with exceptional benefits.

Wildfires have shaped the environment for millennia, but they are increasing in frequency, range and intensity in response to a hotter climate. Scientists at the Department of Energy’s Oak Ridge National Laboratory are incorporating wildfires into high-resolution simulations of the Earth’s climate to better understand and predict environmental change.

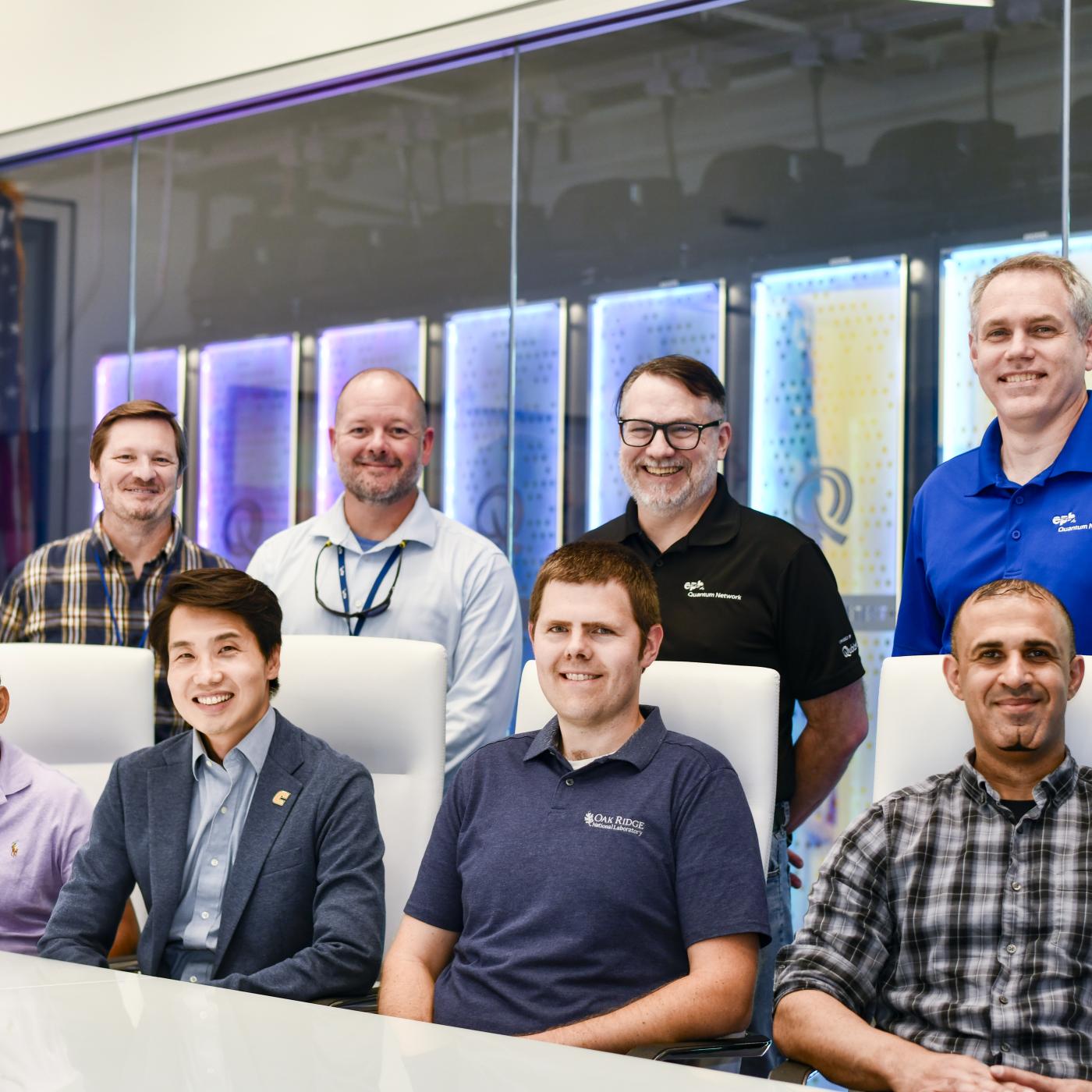

With the world’s first exascale supercomputer now fully open for scientific business, researchers can thank the early users who helped get the machine up to speed.

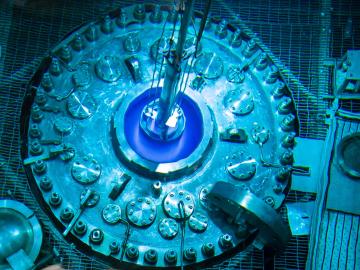

Creating energy the way the sun and stars do, through nuclear fusion, is one of the grand challenges facing science and technology. What’s easy for the sun and its billions of relatives turns out to be particularly difficult on Earth.

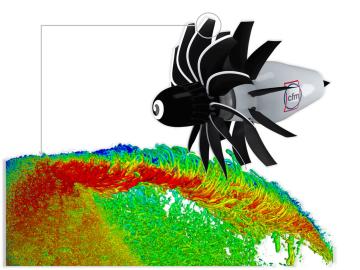

To support the development of a revolutionary new open fan engine architecture for the future of flight, GE Aerospace has run simulations on the world’s fastest supercomputer, which can crunch data at exascale speed, or more than a quintillion calculations per second.

ORNL will team up with six of eight companies that are advancing designs and research and development for fusion power plants with the mission to achieve a pilot-scale demonstration of fusion within a decade.

Lawrence Livermore National Laboratory’s Lori Diachin will take over as director of the Department of Energy’s Exascale Computing Project on June 1, guiding the successful, multi-institutional high-performance computing effort through its final stages.

At the National Center for Computational Sciences, Ashley Barker enjoys one of the least complicated-sounding job titles at ORNL: section head of operations. But within that seemingly ordinary designation lurks a multitude of demanding roles as she oversees the complete user experience for NCCS computer systems.

As renewable sources of energy such as wind and solar power are increasingly added to the country’s electrical grid, old-fashioned nuclear energy is also being primed for a resurgence.

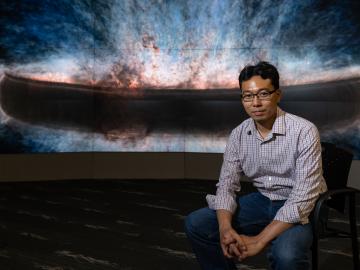

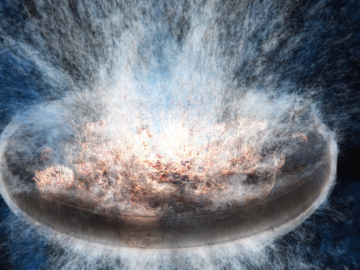

A trio of new and improved cosmological simulation codes was unveiled in a series of presentations at the annual April Meeting of the American Physical Society in Minneapolis.