As Frontier, the world’s first exascale supercomputer, was being assembled at the Oak Ridge Leadership Computing Facility in 2021, understanding its performance on mixed-precision calculations remained a difficult prospect.

Outside the high-performance computing, or HPC, community, exascale may seem more like fodder for science fiction than a powerful tool for scientific research. Yet, when seen through the lens of real-world applications, exascale computing goes from ethereal concept to tangible reality with exceptional benefits.

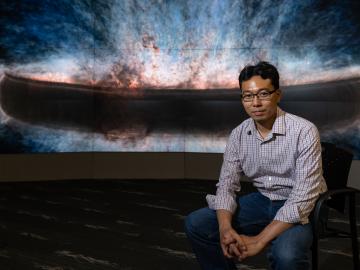

Madhavi Martin brings a physicist’s tools and perspective to biological and environmental research at the Department of Energy’s Oak Ridge National Laboratory, supporting advances in bioenergy, soil carbon storage and environmental monitoring, and even helping solve a murder mystery.

Mirko Musa spent his childhood zigzagging his bike along the Po River. The Po, Italy’s longest river, cuts through a lush valley of grain and vegetable fields, which look like a green and gold ocean spreading out from the river’s banks.

Wildfires are an ancient force shaping the environment, but they have grown in frequency, range and intensity in response to a changing climate. At ORNL, scientists are working on several fronts to better understand and predict these events and what they mean for the carbon cycle and biodiversity.

Wildfires have shaped the environment for millennia, but they are increasing in frequency, range and intensity in response to a hotter climate. The phenomenon is being incorporated into high-resolution simulations of the Earth’s climate by scientists at the Department of Energy’s Oak Ridge National Laboratory, with a mission to better understand and predict environmental change.

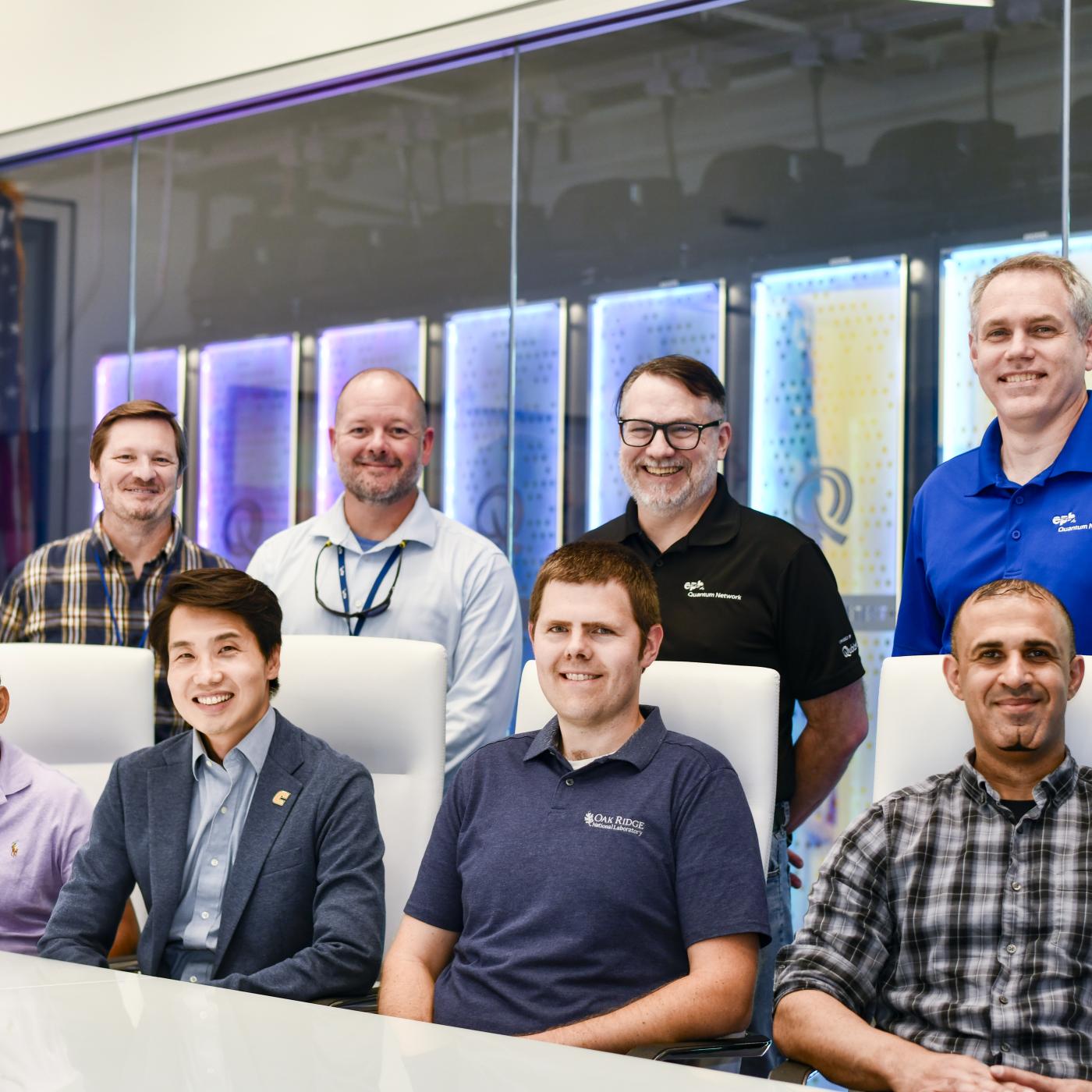

With the world’s first exascale supercomputer now fully open for scientific business, researchers can thank the early users who helped get the machine up to speed.

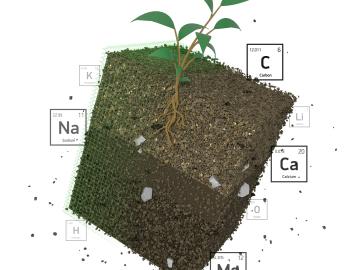

Oak Ridge National Laboratory researchers recently demonstrated use of a laser-based analytical method to accelerate understanding of critical plant and soil properties that affect bioenergy plant growth and soil carbon storage.

Scientist-inventors from ORNL will present seven new technologies during the Technology Innovation Showcase on Friday, July 14, from 8 a.m. to 4 p.m. at the Joint Institute for Computational Sciences on ORNL’s campus.

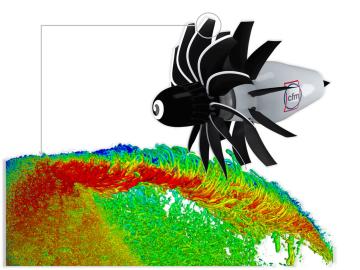

To support the development of a revolutionary new open fan engine architecture for the future of flight, GE Aerospace has run simulations on the world’s fastest supercomputer, which is capable of crunching data at exascale speed, or more than a quintillion calculations per second.