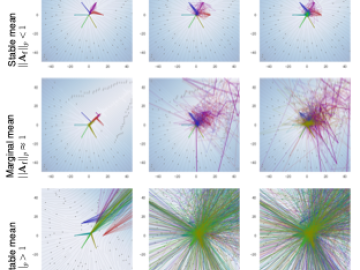

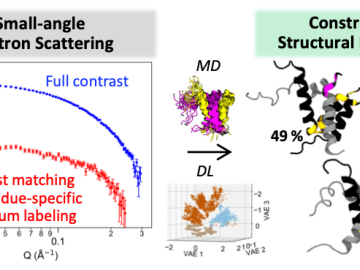

Computational scientists and neutron structural biologists from Oak Ridge National Laboratory developed an integrated workflow using small-angle neutron scattering (SANS), atomistic molecular dynamics (MD) simulation, and an autoencoder-based deep learn